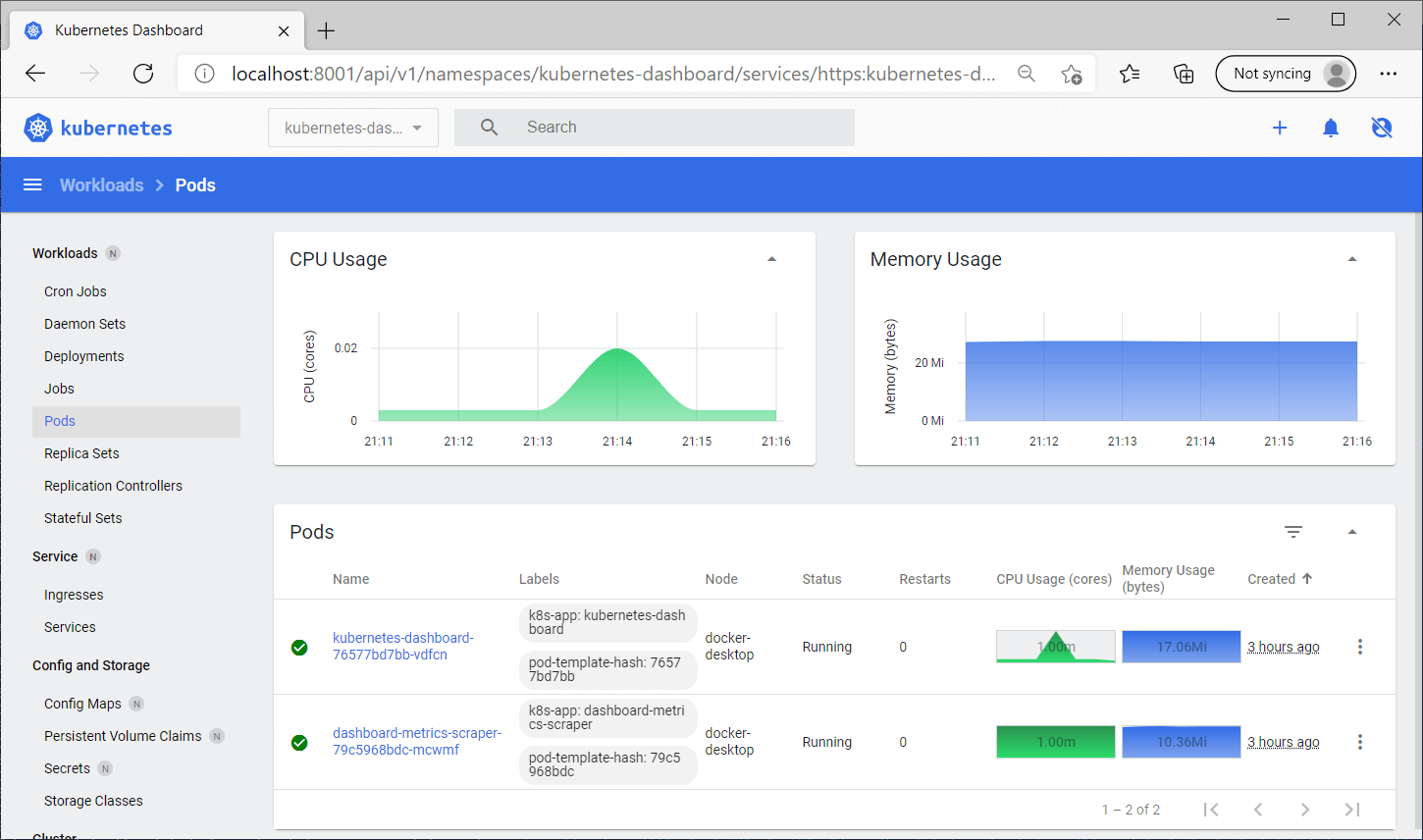

Kubernetes logs have been parsed successfully. However, in the image example, I have singled out the apps/pods that run NGINX, and I want to process the "message" field with the Filebeat NGINX module, since the "message" field exactly matches what that module expects.

The log actually carries some additional information and a different format; I think it follows the standard Kubernetes log prefix. The access log (stdout) and the error log (stderr) are also merged into one file, so there is just one log for the standard nginx container/pod:

rw- 1 root root 4.1M Jan 6 15:54

T13:49:43.865470598+00:00 stdout F /docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration

T13:49:43.865470598+00:00 stdout F /docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/

I've read about some possibly relevant features related to "processors" in Filebeat (Filter and enhance data with processors | Filebeat Reference). The documentation says that the libbeat library provides processors for enhancing events with additional metadata and for performing additional processing and decoding. So processors can be used for additional processing and decoding. However, from the docs I assume that an event can only be processed by the predefined, supported processors, chained like this: event -> processor 1 -> event1 -> processor 2 -> event2.

I see there's a "dissect" processor that can be used to add custom enrichment for a specific field (as we can with Logstash). However, a custom filter/grok is not really what I'm after here, since Filebeat itself has many built-in modules (which include a pipeline/filter), e.g. nginx.

Is it possible to do something like this? -> -> (to process a specific field with another Filebeat module) -> ->

I have considered post-processing with Logstash. Examples: Parse logs in the Common Log Format | Elasticsearch Guide | Elastic; Hints based autodiscover | Filebeat Reference | Elastic. However, if this can be done in Filebeat using a predefined module/processor, that would be better. I also don't know whether that would be easy to set up, since the logs come from containers running on top of Kubernetes.

If there is a better practice for doing this, any help and suggestions would be greatly appreciated!
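To make the "event -> processor 1 -> event1 -> processor 2 -> event2" idea concrete, here is a minimal Python sketch of such a chain applied to one container log line. It is not Filebeat code and does not use any Filebeat API. The function names (`strip_cri_prefix`, `parse_nginx_access`, `run_chain`), the `SAMPLE` line (including its date and the access-log entry) and the field names are all hypothetical, and the regular expressions assume the Kubernetes prefix looks like `<timestamp> <stream> <flag> <message>` and that nginx uses its default "combined" access log format.

```python
import re

# Assumed Kubernetes/CRI-style prefix: "<timestamp> <stdout|stderr> <F|P> <message>"
CRI_LINE = re.compile(
    r"^(?P<time>\S+) (?P<stream>stdout|stderr) (?P<flag>[FP]) (?P<message>.*)$"
)

# Assumed nginx "combined" access log format
NGINX_ACCESS = re.compile(
    r'^(?P<remote_addr>\S+) - (?P<remote_user>\S+) \[(?P<time_local>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<body_bytes_sent>\d+) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"$'
)

# Hypothetical sample line; the date and the request are made up for illustration.
SAMPLE = (
    '2024-01-06T13:49:43.865470598+00:00 stdout F '
    '10.0.0.1 - - [06/Jan/2024:13:49:43 +0000] '
    '"GET / HTTP/1.1" 200 615 "-" "curl/8.0"'
)


def strip_cri_prefix(event: dict) -> dict:
    """Processor 1: split the container prefix off the raw line."""
    m = CRI_LINE.match(event["message"])
    if m is None:
        return event  # not a prefixed line, pass it through unchanged
    return {
        **event,
        "log_time": m["time"],
        "stream": m["stream"],
        "message": m["message"],
    }


def parse_nginx_access(event: dict) -> dict:
    """Processor 2: parse stdout lines that look like nginx access entries."""
    if event.get("stream") != "stdout":
        return event  # stderr carries the nginx error log, leave it alone
    m = NGINX_ACCESS.match(event["message"])
    if m is None:
        return event  # e.g. /docker-entrypoint.sh startup messages
    return {**event, "nginx": m.groupdict()}


def run_chain(event: dict, processors) -> dict:
    """Apply each processor to the output of the previous one."""
    for processor in processors:
        event = processor(event)
    return event


if __name__ == "__main__":
    print(run_chain({"message": SAMPLE}, [strip_cri_prefix, parse_nginx_access]))
```

The sketch splits on the `stream` field first because access and error entries end up in the same file; lines that do not match (such as the entrypoint messages shown earlier) pass through with only the prefix removed.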
